To usher this blog post in, here's some news in French explaining how last week Astérix apparently overtook Interstellar and the new Hunger Games film in French cinemas. I feel like there's a whole lotta snowballing going on with this movie. Pretty awesome! I saw it Wednesday a week ago and it was pretty sweet. Also, Wiplala, a movie I never posted about, premiered as well, and although it's a children's movie spoken completely in Dutch, it's actually pretty cool.

So, specialisation-wise, here we go again. I'm pretty much on schedule with it so far, though the last few weeks have been a little bit busy if I'm honest. As the deadline got closer, it closed in a lot faster than I had anticipated. Due to a fairly large misunderstanding between me, my supervisor and my old curricular terms, I now have to complete this project on December 19th instead of January 9th... which is kind of a bummer! On the other hand, it did push me to take the next step instead of dwelling some more on my renders, so that's good I think!

Like previous blog posts, I'll structure this one into chapters so it's easier to read. So let's see where I left off... ah yes. Simulations.

Simulations

A bunch of, admittedly, very ugly renders of very ugly simulations. That's the first thing I changed. I upped the ante and scaled my RealFlow scenes by a factor of 20 because I wasn't getting the right resolution. This gave me much better results, at the cost of the simulation taking 7 to 8 hours to calculate.

Vorticity of the fluid.

Velocity of the fluid.

I then attempted to render these simulations in separate VRay scenes, but quickly found that VRay didn't render this stuff very fast. The renders themselves also weren't very impressive quality-wise, as a lot of the refractions just seemed to be internal reflections. That's something I still don't completely understand, but alas. Because of this I decided to switch to Mental Ray, as I had been getting decent results rendering the limited number of BiFrost fluid simulations I had previously created. This turned out to be a good decision: it cut my render times in half at double the resolution I was rendering at in VRay. A test result below:

The problem with this was that it took the color for refractions and reflections from Lambert materials I had set up really quickly. I didn't think it was an accurate representation of what refractions would look like using the actual VRay shaders, so I transferred my VRay materials to Mental Ray. Arguably they could have looked much better than this if I had spent more time on them, but really all I needed was the base color and maybe some surface definition.

The tests using these shaders turned out to be much better than I had expected. Another one in the pocket and something less to worry about. Some results below.

Simulation 1

Simulation 2

Simulation 3

I am least satisfied with the first one, as it almost seems like I forgot to turn up the liquid's viscosity. Or maybe it's the gravity daemon I didn't turn up high enough in RealFlow. I'll be returning to this simulation at the end, but only if I have enough time to finish this short. That's including the documentation I have to write about workflow and, partly, pipeline. Fortunately, because I am writing all these blogs I'll have a lot to look back and reflect on. Hurrah!

Shading revisited

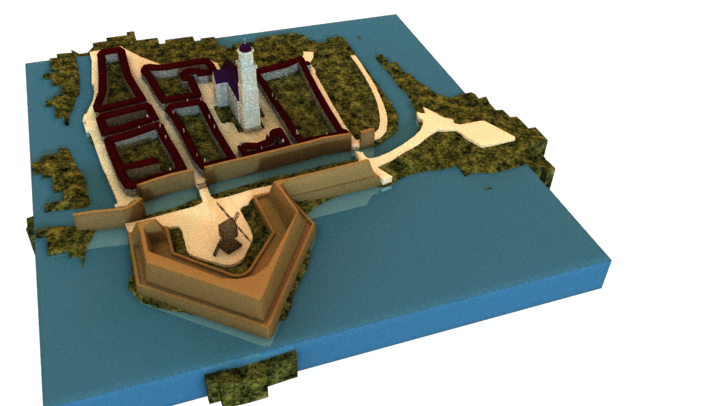

I was really unhappy with how my shading looked on the larger surfaces. Very undefined and really not fitting the style I wanted to go for. During one of several bi-weekly meetings with my supervisor we both agreed my maquette looked like something out of a clown horror movie and I decided to mash up all the shaders. Below are some images I stuck together to show the difference in both lighting conditions and shaders.

I also made the windmills turn in the opposite direction because the original direction didn't feel natural to me. Might have been the lighting or the way I've set up the camera paths. I felt the windmills should turn against the camera movement.

Final Pre-vis

Then, finally, before I could let myself start rendering, I created playblasts of each shot and composed a short out of them. Similar to my previous blog post, I simply stitched the clips together using the same Sony Vegas template I used before, although this time I thought it would be nice to make it more alive. This way it was also easier to understand what exactly was going on. It also helped me guesstimate the rhythm of the clips so it wouldn't feel rushed.

The applause at the end is a joke, plz...

Rendering

After this step I spent some time setting up all my render scenes and render layers. For this I used several common setups like disabling the primary visibility for reflection meshes and adding a holdout material to a duplicate of those meshes, but with primary visibility enabled. This is a really neat trick and it turned out to render my stuff even faster. Next up was setting up some render layers in both Mental Ray and VRay. Fortunately I didn't need that many as I only wanted a shadow layer and a beauty layer.

I set up my renders in such a way that they would first output a beauty layer so I could start creating simple pre-comps. I was then planning on recreating my beauty pass, stealing the alpha just in case, and keeping the whole comp script the same for every shot. This didn't work perfectly, but I'm sure I shaved a lot of time off by creating these comps before my renders were finished. Below are the results of some quick and dirty pre-comps.

Then, when the rendering was underway, I made a render sheet so I could calculate drive space and start new renders on time without losing much time to bodily needs like sleeping, eating and visiting the toilet. That was last week and I feel like I'm still recovering from the 150+ hours of managing all my renders on two machines. I'm glad that stuff is over. Lesson learned. Next time I'm going to write a macro that simply batches through all that stuff. Or use a render manager like Thinkbox's Deadline. Below is an image of my total render time. This excludes many of the tests and the creation of irradiance and light caches.
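For the curious, here is a minimal sketch of what such a render sheet boils down to. The shot names, frame counts, file sizes and per-frame render times are made-up placeholders, not my actual numbers; only the pass counts (13 for VRay, 7 for Mental Ray) come from the pass lists in this post.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    name: str                 # hypothetical shot label
    frames: int               # number of frames in the shot
    passes: int               # number of render passes written per frame
    mb_per_frame: float       # assumed average size of one pass of one frame, in MB
    minutes_per_frame: float  # assumed render time for all passes of one frame


def drive_space_gb(shot: Shot) -> float:
    """Estimated disk usage of one shot in GB."""
    return shot.frames * shot.passes * shot.mb_per_frame / 1024


def render_hours(shot: Shot) -> float:
    """Estimated wall-clock render time of one shot in hours."""
    return shot.frames * shot.minutes_per_frame / 60


# Placeholder values purely for illustration.
shots = [
    Shot("shot_010_vray", frames=240, passes=13, mb_per_frame=4.0, minutes_per_frame=6.0),
    Shot("shot_020_mentalray", frames=120, passes=7, mb_per_frame=4.0, minutes_per_frame=3.0),
]

total_gb = sum(drive_space_gb(s) for s in shots)
total_hours = sum(render_hours(s) for s in shots)

for s in shots:
    print(f"{s.name}: {drive_space_gb(s):.2f} GB, {render_hours(s):.1f} h")
print(f"total: {total_gb:.2f} GB, {total_hours:.1f} h")
```

Swapping in real frame counts and measured averages gives a rough idea of whether a drive will fill up and when the next render can be queued.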

To summarize. I've rendered out the following passes.

Mental Ray:

Alpha - Beauty - Diffuse - Reflection - Refraction - Shadow - Specular

VRay:

Alpha - Beauty - Diffuse - GI - Lighting - Normals - ObjectID - Reflections - Refractions - Shadows - Specular - Velocity - zDepth

After some thought I decided I'd render everything to a drive which from that point on would act as my plate drive. It's only 500 GB, but for the current purpose that's more than enough. It's also extremely handy that it's a 2.5" external drive, because this way I am not limited to my workstation. If, for a moment, I don't feel like working at that location, I simply move to my laptop. It's absolutely fabulous.

Compositing

Immediately after the first batch of passes was ready, I spent some time setting up base comps for all the shots. For some, this just required gathering all the render layers and merging them together to mimic the beauty. For the first and last shot, this required me to export the original shot footage to a sequence plate that needed to be 24.97fps as opposed to 29.97fps. At first this gave some issues, though with a short expression, combined with forcing it to calculate in integers, I was able to cut out frames without seeing an actual difference in the footage.
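The idea behind that frame-dropping expression can be sketched in a few lines of Python. This is not the actual expression from my comp, just an illustration of the integer mapping, assuming zero-based frame numbers and the two frame rates mentioned above; the function name is made up.

```python
import math


def source_frame(out_frame: int, src_fps: float = 29.97, dst_fps: float = 24.97) -> int:
    """Map an output frame index to the source frame to read.

    Scaling by src_fps / dst_fps and rounding down to an integer means
    every so often a source frame is skipped, which lowers the frame
    rate without blending frames together.
    """
    return math.floor(out_frame * src_fps / dst_fps)


# Which source frames the first eleven output frames would read.
mapping = [source_frame(f) for f in range(11)]
print(mapping)
```

With these rates roughly every sixth source frame gets dropped, and because whole frames are picked instead of interpolated, each remaining frame stays untouched.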

Base comp to mimic the beauty. Another one of these, for the fluid render passes, is on the right.
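As a rough sketch of what "mimicking the beauty" means: the light-carrying passes are added back together per pixel. Which passes sum up to the beauty depends on the renderer and its settings, so the pass selection below (GI, lighting, reflection, refraction, specular) is an assumption, and the pixel values are invented for illustration.

```python
# Assumed set of additive passes; adjust to whatever the renderer actually outputs.
ADDITIVE_PASSES = ("gi", "lighting", "reflection", "refraction", "specular")


def rebuild_beauty(pixel_passes, names=ADDITIVE_PASSES):
    """Sum the given passes channel by channel for a single RGB pixel."""
    r = g = b = 0.0
    for name in names:
        pr, pg, pb = pixel_passes[name]
        r += pr
        g += pg
        b += pb
    return (r, g, b)


# One made-up pixel, with one RGB triple per pass.
pixel = {
    "gi": (0.125, 0.125, 0.125),
    "lighting": (0.5, 0.25, 0.25),
    "reflection": (0.0625, 0.0625, 0.125),
    "refraction": (0.0, 0.0, 0.0),
    "specular": (0.0625, 0.0625, 0.0),
}

rebuilt = rebuild_beauty(pixel)
print(rebuilt)
```

Comparing the rebuilt result against the rendered beauty pass is a quick way to check that no pass is missing before grading the passes individually.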

A pre-comp to throw away all unnecessary information. At this point I am not using 32-bit float values for the image sequence I am exporting, since that would take too much time to write and read, and I want to fly through this part of comping. For now the 16-bit .png format keeps the size small and maintains nearly all RGBA information. For the final image output I will go back and re-render all of this to 32-bit TIFFs so I am not throwing away information that would otherwise increase the quality of this short.

This is an image from the presentation I gave yesterday to indicate what I've done in comp so far and what I am still planning on adding. During this presentation my supervisor and I came to the conclusion that the floor is reflective and that the maquette needed a reflection. I had not thought about that while rendering, so I needed to fake one. I also added another global discoloration overlay to integrate the CG better into the scene, and I used my normal pass to strengthen the lighting and shadow effects from both windows.

Something else entirely: GenArts is doing god's work with their plugins. I'm having a great time using them, and the only downside is that they rely heavily on GPU acceleration, which is something Nuke's dedicated nodes do as well. When both are present in the same comp and both are allowed to use the GPU (if available), a mismatch occurs and the Sapphire plugins stop working. Not one of them. All of them. I have yet to find a suitable solution to this issue. I've kind of circumvented this problem by recreating this effect using regular blur nodes. The difference will probably not be noticeable when the camera is causing motion blur. However, I will need to find a proper solution, because I am now missing camera lens information like lens-shaped artifacts, bokeh, lens noise and highlights. Below a comparison.

And after all of this I built something similar to the pre-comp above, only this one is for the final touches, like grain, re-formatting, camera shake and re-framing. I felt I didn't have enough processing speed left to make the final judgement, and I definitely didn't want to do it in proxy mode as I would lose a lot of information right there. For this step I simply render the "final" sequence out to my plate drive and then import it again. This also uses 16-bit PNGs, but I'll be sure to upgrade to 32-bit TIFFs once I feel the shot is ready to go.

2.35:1 anamorphic format

I'm considering reformatting the footage to a 2.35:1 anamorphic format since that will probably feel more cinematic than a short at 1.77:1 widescreen, though I don't want to lose the 'home-video' vibe that's going on right now. It's something I can still change later, so I'll postpone the final decision. For now, I just went ahead and made it anamorphic. Below is the result.
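The arithmetic behind such a reformat is simple enough to sketch. This assumes a plain crop of a 1920x1080 frame (the resolution is a placeholder, and a true anamorphic squeeze would work differently), and the function name is made up.

```python
def letterbox(width: int, height: int, target_ratio: float = 2.35):
    """Return (new_height, bar_height) for cropping a frame to target_ratio.

    The width is kept, the height is reduced, and the new height is forced
    to an even number so the crop can be split evenly between the top and
    bottom of the frame.
    """
    new_height = round(width / target_ratio)
    new_height -= new_height % 2          # keep the height even
    bar_height = (height - new_height) // 2
    return new_height, bar_height


new_height, bar = letterbox(1920, 1080)
print(f"crop to 1920x{new_height}, removing {bar} px from top and bottom")
```

Since the crop only removes image from the top and bottom, it is worth checking the framing of each shot before committing to it.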

Livestreaming

I've been live-streaming on and off because of the amount of bandwidth it generates... or better yet, consumes. The videos are only available on Twitch for a number of days; however, it gives you an option to export your recordings to YouTube in 2-hour chunks if you link your account to it. So really what I'm saying is, all my work can be found on YouTube. This is also incredibly useful for when I have to write my documentation. Weeee!

That's it again. Lots and lots of text. Hope I didn't bore you. Thanks for reading,

Pim